YouTube has expanded its AI-powered likeness detection technology to a pilot group of government officials, political candidates and journalists, allowing them to identify and request removal of unauthorized deepfake videos that resemble their appearance. The move comes as technology companies face increasing concerns about AI-generated impersonation and misinformation online. The platform said the initiative aims to protect individuals who play key roles in civic discourse while maintaining freedom of expression, including parody and satire. Participants in the program must verify their identity before using the tool, which scans uploaded videos to detect potential AI-generated impersonations and enables users to flag content through YouTube’s privacy complaint process.

- Key Takeaways: YouTube Deepfake Detection Tool Expansion

- YouTube Expands Likeness Detection Technology

- How the Deepfake Detection Tool Works

- Identity Verification Required for Participants

- Similar to YouTube’s Content ID System

- AI Deepfakes and Growing Concerns

- AI Labels and Policy Safeguards

- Development Timeline and Industry Collaboration

- Wider Policy Efforts and Future Expansion

- Truth and Awareness in the Digital Age

- FAQs on YouTube Deepfake Detection Tool Expansion

Key Takeaways: YouTube Deepfake Detection Tool Expansion

- YouTube expanded its AI likeness detection tool to government officials, journalists and political candidates.

- The technology identifies AI-generated deepfake videos that resemble a person’s appearance.

- Eligible users can review detected matches and request removal through YouTube’s privacy complaint process.

- The company said the initiative aims to protect the integrity of public discourse while preserving parody and satire.

- Participants must verify their identity with a government-issued ID and a selfie or video.

- The system currently focuses on facial likeness, though YouTube is also exploring voice impersonation detection.

- AI-generated videos on the platform will carry labels depending on context and sensitivity.

- YouTube plans to expand access to more officials, candidates and journalists over time.

YouTube Expands Likeness Detection Technology

YouTube on Tuesday announced it is expanding its AI likeness detection technology to a pilot group of government officials, political candidates and journalists. The system is designed to identify AI-generated deepfake videos that resemble real individuals and allow them to request removal if the content violates the platform’s policies.

The tool aims to help protect individuals who are often at the center of public debate and breaking news. According to YouTube, the expansion focuses on safeguarding identities while maintaining the platform’s longstanding approach to protecting free expression.

The company said the tool is available free of charge to participants who enroll in the pilot program. Eligible users will receive invitations from YouTube and can choose whether to participate.

“This expansion is focused on protecting the integrity of public discourse,” said Leslie Miller, vice president of Government Affairs and Public Policy at YouTube, during a press briefing ahead of Tuesday’s launch.

How the Deepfake Detection Tool Works

YouTube’s likeness detection technology scans videos uploaded to the platform to identify AI-generated faces that resemble real people. When a potential match is detected, the system alerts the individual whose likeness appears to be used.

Participants can then review the flagged content and decide whether to submit a removal request through YouTube’s privacy complaint process.

However, a detection match does not automatically result in removal. Each case is reviewed under the platform’s existing privacy policies.

Key aspects of the system include:

- Scanning uploaded videos for AI-generated facial likeness

- Alerting verified participants when potential matches appear

- Allowing participants to review and request removal of flagged videos

- Applying YouTube’s privacy review process before any action is taken

YouTube emphasized that parody, satire and political commentary remain protected forms of expression on the platform.
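The flow described above (scan, alert, participant review, then privacy review) can be sketched as a small state machine. This is only an illustrative sketch, not YouTube's implementation: every name here (`Status`, `Match`, `scan_upload`, `request_removal`, `privacy_review`) is hypothetical, and the actual face matching is stubbed out as a list of names assumed to come from some detector.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Status(Enum):
    DETECTED = auto()           # system flagged a potential likeness match
    REMOVAL_REQUESTED = auto()  # participant filed a privacy complaint
    REMOVED = auto()            # privacy review found a policy violation
    KEPT = auto()               # privacy review found no violation


@dataclass
class Match:
    video_id: str
    participant: str
    status: Status = Status.DETECTED


def scan_upload(video_id, detected_faces, verified_participants):
    """Create one Match per verified participant whose likeness was detected.

    `detected_faces` stands in for the output of a hypothetical detector.
    """
    return [
        Match(video_id, name)
        for name in detected_faces
        if name in verified_participants
    ]


def request_removal(match):
    """The participant reviews the alert and chooses to request removal."""
    match.status = Status.REMOVAL_REQUESTED
    return match


def privacy_review(match, violates_policy):
    """Apply the review step; a detection alone never removes a video."""
    if match.status is not Status.REMOVAL_REQUESTED:
        return match
    match.status = Status.REMOVED if violates_policy else Status.KEPT
    return match
```

In this sketch a video can only reach `REMOVED` after both a participant request and a review that finds a violation, which mirrors the article's point that a match does not automatically lead to removal. Parody or satire would be a case where the review returns `KEPT`.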

Identity Verification Required for Participants

Participants must verify their identity before accessing the detection tool. According to YouTube, the verification process requires:

- Submission of a government-issued identification document

- A selfie or video of the participant

Once verified, users can create a profile within the system. They will then receive notifications through YouTube Studio when the platform detects videos that resemble their appearance.

Participants can review the detected matches and request removal of content they believe violates YouTube policies.

Users who have not received an invitation to participate in the program can also contact YouTube directly to request access.

The company also clarified that the information submitted during verification will not be used to train Google’s AI models. Instead, the data will only be used to power the detection technology.

Similar to YouTube’s Content ID System

The deepfake detection system functions in a way similar to YouTube’s existing Content ID system, which identifies copyright-protected material in uploaded videos.

Content ID allows creators to detect when their copyrighted content is used on the platform. The new likeness detection tool applies a similar concept but focuses on identifying AI-generated facial likeness.

YouTube said the system could eventually evolve to include additional capabilities, including:

- Blocking uploads that violate policies before they go live

- Allowing individuals to monetize videos using their likeness

These features would mirror the structure of the Content ID system, which enables creators to monetize or control the use of their content.

AI Deepfakes and Growing Concerns

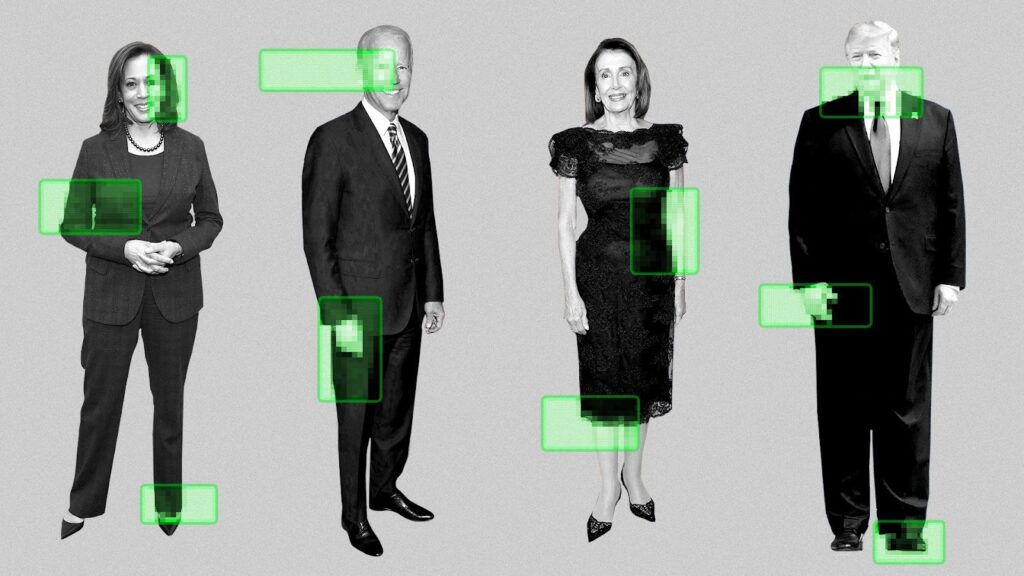

The rapid development of artificial intelligence has made it easier to create highly realistic videos that depict people saying or doing things they never actually did.

These AI-generated videos, commonly referred to as deepfakes, have raised concerns about misinformation, impersonation and scams online.

According to YouTube, such technology can be used to manipulate public perception by creating convincing videos of politicians, journalists and other influential figures.

Leslie Miller noted that the risks of AI impersonation are particularly high for individuals involved in civic discourse.

“We know that the risks of AI impersonation are particularly high for those in the civic space,” she said.

Tech companies have increasingly introduced safeguards to address these risks. YouTube CEO Neal Mohan previously named AI transparency and protections among the company’s top priorities for 2026.

AI Labels and Policy Safeguards

YouTube said videos created using artificial intelligence will carry labels on the platform, though their placement may vary depending on the content.

In some cases, the label appears in the video description. For content related to more sensitive topics, the label may appear directly on the video itself.

“There’s a lot of content that’s produced with AI, but that distinction’s actually not material to the content itself,” explained Amjad Hanif, YouTube’s vice president of Creator Products.

“It could be a cartoon that is generated with AI. And so I think there’s a judgment on whether it’s a category that maybe merits a very visible disclaimer,” he said.

YouTube said the labeling approach follows its existing policies governing AI-generated content.

Development Timeline and Industry Collaboration

YouTube began developing its likeness detection technology in 2024 in collaboration with Creative Artists Agency. The company also tested the system with top creators including MrBeast and Marques Brownlee.

The platform expanded access to around four million creators in the YouTube Partner Program after initial testing.

According to Amjad Hanif, creators using the system over the past year flagged relatively few videos for removal.

“Most of it turns out to be fairly benign or additive to their overall business,” he said.

The company did not disclose which politicians or officials are part of the initial pilot group. It also declined to confirm whether figures such as President Trump are included.

Wider Policy Efforts and Future Expansion

Beyond platform safeguards, YouTube said it supports broader regulatory efforts aimed at addressing AI impersonation.

The company is backing the NO FAKES Act, a bill before the US Congress that seeks to regulate the use of artificial intelligence to create unauthorized replicas of a person’s voice or visual likeness.

YouTube also plans to expand the detection technology to a wider group of government officials, political candidates and journalists over time.

For now, the system focuses on identifying facial likeness, though the company is also exploring the detection of voice impersonation.

“Our goal is to get this technology into the hands of the people who need it, and we have plans to significantly expand access over the coming year,” a company spokesperson said.

Truth and Awareness in the Digital Age

The rapid spread of AI-generated content and deepfakes highlights a broader concern about authenticity and truth in the digital world. As technology evolves, identifying what is real and what is fabricated becomes increasingly complex. In such an environment, awareness and discernment play an important role in protecting individuals and public discourse from deception.

Spiritual teachings often emphasise the importance of truth and clarity in human life. Tatvdarshi Sant Rampal Ji Maharaj explains that true spiritual knowledge enables individuals to recognise reality and avoid deception in different forms. According to His teachings, understanding truth through genuine spiritual knowledge helps people remain aware and make informed decisions in a world where misinformation and illusion can easily spread.

As conversations around artificial intelligence, identity protection, and misinformation continue globally, the idea of seeking truth, both in technology and in life, remains relevant for society.

FAQs on YouTube Deepfake Detection Tool Expansion

1. What is YouTube’s deepfake detection tool?

It is a likeness detection system that scans videos for AI-generated faces resembling real individuals and allows verified users to request removal.

2. Who can use the tool?

The pilot program is open to government officials, political candidates and journalists invited by YouTube. Those who have not received an invitation can contact YouTube directly to request access.

3. Does detection automatically remove a video?

No. YouTube reviews each request under its privacy policy before deciding whether to remove the content.

4. How do participants verify their identity?

Participants must submit a government-issued ID and a selfie or video for identity verification.

5. Does the system detect voice impersonation?

Currently it focuses on facial likeness, but YouTube said it is exploring voice impersonation detection.