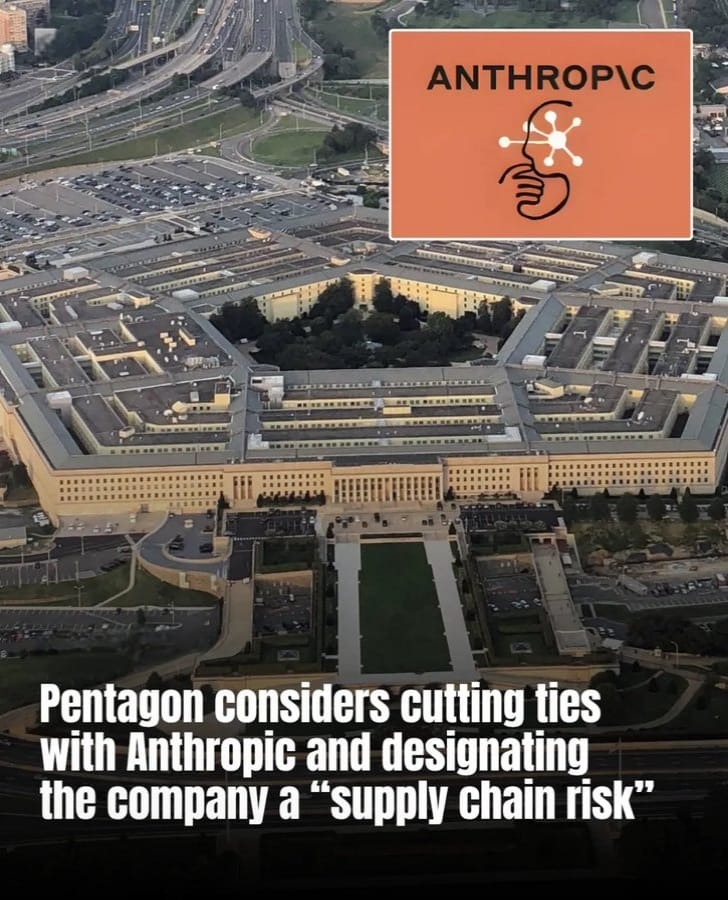

A major dispute has emerged between the United States Department of Defense (Pentagon) and leading artificial intelligence firm Anthropic, intensifying the growing debate over how AI technology should be used in military operations.

In an unusual and controversial move, the Pentagon has formally categorized Anthropic as a “supply chain risk.” The designation could restrict defense contractors from using the company’s popular AI assistant, Claude, in projects linked to U.S. military systems.

The development has triggered widespread discussion across the technology sector, with experts questioning whether governments should have unrestricted control over AI capabilities or whether private companies should maintain ethical limits on how their technologies are deployed.

Pentagon Flags Anthropic as Supply Chain Risk: Key Highlights

- U.S. Pentagon Labels Anthropic a Supply Chain Risk, Impacting Military AI Use

- Pentagon–Anthropic Clash Deepens Over AI Safety Limits in Military Use

- Anthropic Prepares Legal Battle After Pentagon Labels AI Firm a Supply Chain Risk

- Pentagon Directive Forces Defense Giants to Rethink Anthropic AI Usage

- Critics Warn Pentagon’s Anthropic Ban Could Hurt U.S. AI Innovation

- Pentagon’s Anthropic Ban Sparks Debate as OpenAI Gains Edge in Defense AI Race

- Public Backlash Drives Surge in Popularity of Anthropic’s Claude Worldwide

- Inside the Pentagon’s “Supply Chain Risk” Warning for Technology Firms

- Future of AI Governance: Pentagon-Anthropic Clash Raises Global Questions

- Pentagon-Anthropic Dispute Signals New Era of AI Governance

Pentagon’s Decision Raises Questions About AI Use in Defense

The Pentagon confirmed that Anthropic and its AI products have been officially marked as a supply chain risk, a classification that could limit the company’s involvement in future defense-related contracts.

Under this designation, U.S. government agencies and contractors working on military systems may be restricted from integrating Anthropic’s AI tools into sensitive defense technologies.

Officials argue that the decision is necessary to ensure the military can deploy advanced technologies without operational restrictions imposed by private vendors.

Defense leaders have stated that any limitations placed on the military’s use of AI could potentially affect national security and battlefield readiness, especially as artificial intelligence becomes increasingly important in defense planning, cybersecurity, intelligence analysis, and logistics.

Dispute Triggered by AI Safety Restrictions

The conflict reportedly began after Anthropic declined requests to remove certain ethical safeguards embedded within its AI model Claude. These safety measures are designed to prevent the AI system from being used for controversial or potentially harmful activities, including:

- Mass surveillance targeting the domestic population

- Development or deployment of fully autonomous weapons without human oversight

Anthropic’s leadership has emphasized that these guardrails are a core part of the company’s mission to ensure responsible AI development. CEO Dario Amodei has previously stated that advanced AI systems must include strict ethical frameworks to prevent misuse, particularly in areas that could affect civil liberties or escalate military conflicts.

However, defense officials argue that such restrictions could limit how the armed forces use emerging technologies during critical operations.

Anthropic Prepares Legal Challenge

Anthropic has strongly pushed back against the Pentagon’s classification and is preparing to challenge the decision through legal channels. Company representatives say the designation is unprecedented for a U.S.-based technology firm, noting that supply chain risk labels have historically been applied to organizations connected with foreign adversaries rather than domestic AI developers.

The company also clarified that the designation primarily affects Pentagon-related projects, meaning most commercial customers using Claude in business, research, and consumer applications are unlikely to experience disruptions. Legal experts believe the dispute could evolve into a high-profile court battle, potentially shaping how governments regulate artificial intelligence companies in the future.

Defense Contractors Adjusting to the Directive

The Pentagon’s announcement has already prompted responses from major defense contractors.

- Lockheed Martin Evaluating Alternatives

Defense giant Lockheed Martin confirmed that it will comply with government directives and begin assessing alternative AI providers for projects involving defense technologies. The company indicated that its systems are designed to operate with multiple AI platforms, meaning the shift away from Anthropic tools may not significantly disrupt ongoing operations.

- Microsoft Maintains Non-Military Collaboration

Technology company Microsoft noted that its partnerships with Anthropic could continue in areas unrelated to defense contracts. This means that while military-related integrations may be restricted, collaboration in commercial cloud computing and enterprise AI services may remain unaffected.

Critics Warn of Risks to U.S. Innovation

The Pentagon’s decision has sparked criticism from several policymakers and technology experts.

Some analysts argue that applying a supply chain risk label to an American AI company could discourage innovation and investment in the U.S. artificial intelligence sector.

Others believe the move may create tension between government institutions and technology developers, especially as private firms increasingly lead the development of cutting-edge AI systems. Critics also warn that limiting collaboration with companies like Anthropic could slow the military’s access to advanced AI capabilities that are being rapidly developed in the private sector.

Competition in the AI Sector Intensifies

The controversy has also highlighted the fierce competition among leading AI developers.

Reports suggest that OpenAI may expand its cooperation with the U.S. government to provide AI tools for secure or classified environments. OpenAI’s chatbot ChatGPT is already widely used across industries and could potentially fill gaps created by restrictions on Anthropic technology.

The shift underscores the strategic importance of AI providers as governments worldwide race to integrate artificial intelligence into national defense systems.

Public Reaction Boosts Anthropic’s Popularity

Despite the controversy, Anthropic has experienced a surge in public attention and user growth.

According to company statements, millions of new users began exploring Claude during the period of the dispute, with the application climbing app store rankings across multiple countries.

Supporters of the company have praised its commitment to maintaining ethical safeguards in AI development, particularly regarding issues like surveillance and autonomous weapons.

The episode has sparked broader discussions about how technology companies should balance innovation, responsibility, and government demands.

Understanding the “Supply Chain Risk” Designation

In U.S. national security policy, a supply chain risk designation typically refers to technology providers whose products could pose threats such as:

- Potential system vulnerabilities

- Security risks in critical infrastructure

- Foreign influence or espionage concerns

When applied, the designation can lead to significant consequences, including restrictions on federal procurement and limitations on defense contracts. Applying such a label to a domestic AI developer, however, is considered highly unusual, which is why the decision has drawn significant scrutiny from legal and technology communities.

What This Means for the Future of AI Governance

The standoff between the Pentagon and Anthropic highlights a deeper challenge facing the global technology ecosystem. As artificial intelligence becomes central to national security strategies, governments and private companies must navigate difficult questions, including:

- Who ultimately controls how AI systems are used?

- Should companies be allowed to impose ethical restrictions on powerful technologies?

- How can innovation continue while ensuring responsible deployment?

Experts say the outcome of the legal and policy battles surrounding Anthropic could influence international AI governance frameworks and shape how future technologies are regulated.

Pentagon’s Anthropic Ban Sparks Global Debate on AI Control

The Pentagon’s decision to classify Anthropic as a supply chain risk represents one of the most significant confrontations yet between the U.S. government and a major AI developer. While defense officials argue that unrestricted access to artificial intelligence tools is essential for national security, Anthropic maintains that ethical safeguards are necessary to prevent dangerous misuse of powerful technologies.

As legal challenges unfold and AI competition intensifies, the dispute may ultimately determine how governments and technology companies cooperate, or clash, in shaping the future of artificial intelligence.